How to integrate Siri Shortcuts with your app — and why you should

(Editor’s Note: ArcTouch principal engineer Bruno Pinheiro contributed to this article.)

Voice as an interface is one of the top mobile trends for 2018. And despite the buzz around augmented reality and personalized emojis, Apple’s introduction of Siri Shortcuts at the recent Worldwide Developers Conference (WWDC) may just have been the most important new development for the future of mobile.

A little Siri and voice history

Siri, of course, isn’t new. Apple’s helpful voice assistant has been in iOS since 2011. But it wasn’t until 2016 that Apple gave app developers access to Siri with the first-generation SiriKit framework. As our Pedro Costa wrote back then, SiriKit was limited to seven types of applications. And last year, SiriKit was incrementally improved — offering app developers the ability for the first time to allow transactional features through voice control (e.g. a banking app could support bill payment or money transfer through voice commands).

Meanwhile, the way that we, as consumers, have been using our voices to interact with content and apps has changed dramatically over the past few years. You can thank Amazon for much of that. Alexa, which lives in Amazon’s Echo line of smart speakers and a growing number of smart home devices, has helped voice-driven apps go mainstream. Google has followed with Google Home, which in combination with the Google Assistant has encouraged more developers to build voice-driven applications. And even on our phones, we are now using our voice more often to do searches, dictate messages, and access apps.

Enter Siri Shortcuts, which will be an enabler for two different trends in mobile. First, it offers developers an easy way to expose their apps to Siri and voice interactions. Second, it allows apps to offer up small snippets of key information (similar to what Google refers to as micro-moments) based on user context. And with support for iOS, watchOS and macOS based apps, Siri Shortcuts can enhance a broad set of experiences.

What the Siri Shortcuts user experience looks like

Siri Shortcuts, as the name suggests, is a shortcut to the information users value most in your app. These shortcuts are displayed as cards, which are the result of any Siri search that relates to your shortcut. Apple suggests only integrating Siri Shortcuts in your app for different user actions that are repeated often — and we agree.

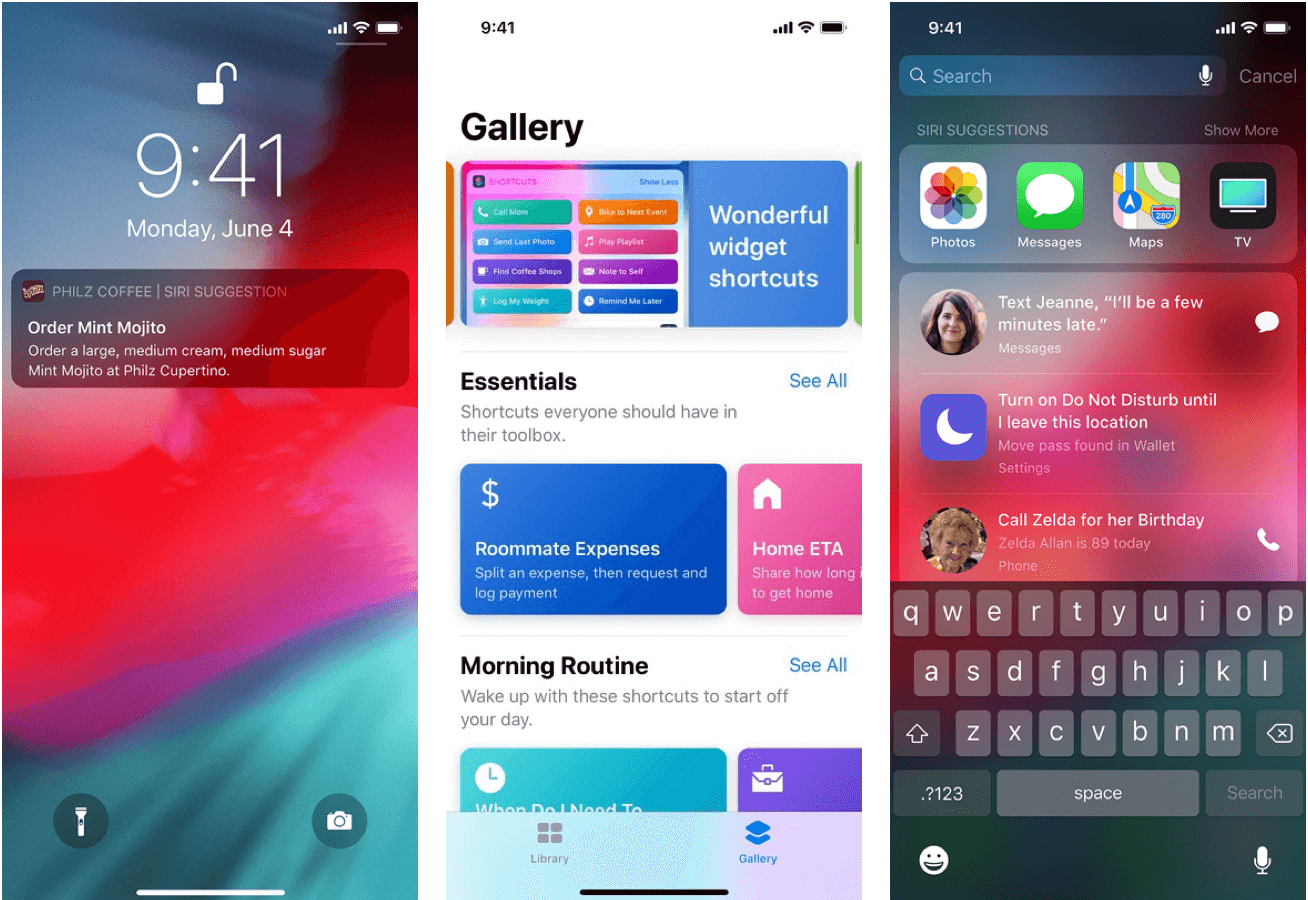

Siri Shortcuts allow app developers to show contextual information from their apps in different iOS system screens (as shown above). SOURCE: developer.apple.com

If you integrate Siri Shortcuts with your app, you can:

- Suggest actions to Siri that users can perform in your app, so that Siri can display them in the future.

- Provide users the ability to add shortcuts directly from your app user interface.

- Allow users to choose voice phrases for different actions in your app within the Siri Shortcuts app, which will be available when iOS 12 is released in the fall.
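As a rough sketch of the second option, an app could present the system "Add to Siri" screen from its own UI. The view controller name, the `orderActivity` property, and the button action below are hypothetical; only the `INShortcut` and `INUIAddVoiceShortcutViewController` types come from the iOS 12 SDK.

```swift
import UIKit
import Intents
import IntentsUI   // INUIAddVoiceShortcutViewController

@available(iOS 12.0, *)
final class OrderConfirmationViewController: UIViewController,
                                             INUIAddVoiceShortcutViewControllerDelegate {

    // Hypothetical activity describing the action to offer as a shortcut.
    var orderActivity: NSUserActivity?

    // Call this from an "Add to Siri" button in your own UI.
    @objc func addToSiriTapped() {
        guard let activity = orderActivity else { return }
        let shortcut = INShortcut(userActivity: activity)
        let controller = INUIAddVoiceShortcutViewController(shortcut: shortcut)
        controller.delegate = self
        present(controller, animated: true)
    }

    // Called when the user has recorded a phrase (or an error occurred).
    func addVoiceShortcutViewController(_ controller: INUIAddVoiceShortcutViewController,
                                        didFinishWith voiceShortcut: INVoiceShortcut?,
                                        error: Error?) {
        controller.dismiss(animated: true)
    }

    // Called when the user backs out without adding the shortcut.
    func addVoiceShortcutViewControllerDidCancel(_ controller: INUIAddVoiceShortcutViewController) {
        controller.dismiss(animated: true)
    }
}
```

The system screen handles recording the phrase, so the app only needs to present it and dismiss it again.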

From a developer’s perspective, we’ll need to identify possible repetitive flows and start telling Siri about those actions. This act of your app telling Siri about a shortcut is referred to by Apple as “donation” — and it is done by calling a new set of APIs in SiriKit every time the user performs an action that is likely to be repeated.

For example, let’s say you have a pizza ordering app. You enable a shortcut that makes it possible to order pizza directly from Siri. After you notify Siri about this action, Siri will look for patterns in your user’s activities, taking into account how and when they use your app to order pizza, with characteristics like:

- Date and time

- Location

- Patterns like pizza size, toppings, etc.

Based on those parameters, Siri will choose the best time to suggest the use of that shortcut. If the user regularly orders pizza at 8 p.m. on Fridays, Siri may proactively show that shortcut at that time. Or, users can use the Shortcuts app to assign a phrase like “Friday dinner” to that shortcut. From that moment on, the user will be able to trigger that shortcut by saying “Hey Siri, Friday dinner.” Siri will ask for a confirmation from the user and will place the order. If there are still missing pieces of information (the shortcut doesn’t have all required fields for placing an order), the shortcut can open the app and pre-populate the order with the known items, and allow the user to complete the transaction from there.

With the Shortcuts app, users can define phrases that trigger multiple shortcuts. For example, they can create a shortcut which will be invoked with, “Hey Siri, let’s relax,” and it could trigger:

- A home automation app to dim the brightness of lights

- A streaming app to turn the TV on, and start a particular show

- A pizza app to order a pepperoni pizza

How to integrate Siri shortcuts into your app

Being a top app development company and Alexa skill developers, we of course couldn’t wait to dig into the code and go through the process of integrating a Siri shortcut into an app. Here’s how we built a proof-of-concept shortcut for a pizza app:

There are two ways to implement shortcuts: setting a property on NSUserActivity, or creating an Intent. If you are already using the NSUserActivity API in your iOS app, you can simply set this property:

    userActivity.isEligibleForPrediction = true

Setting this property to true will enable Siri to start predicting users’ activities in your app.

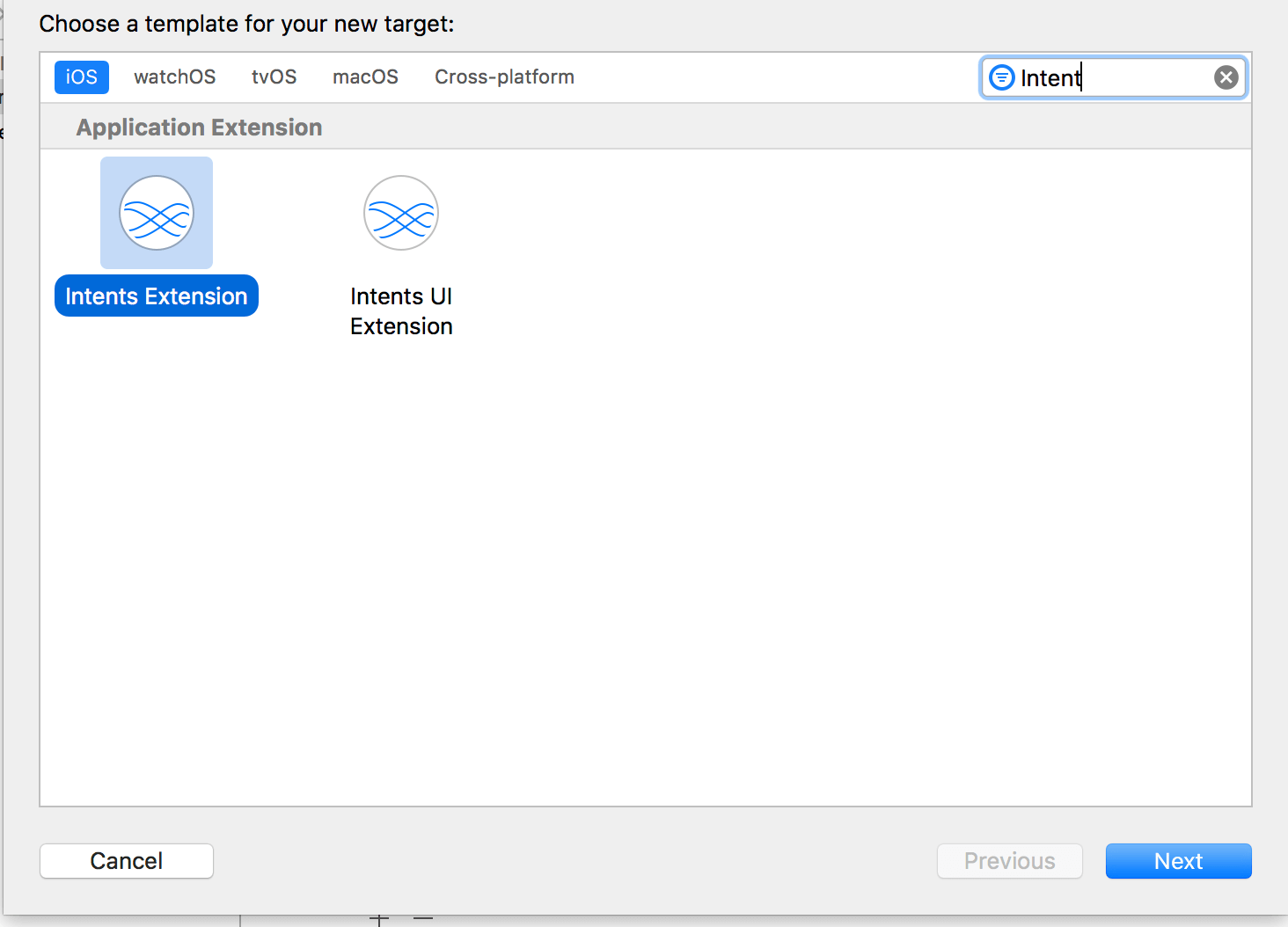
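As a rough illustration of the NSUserActivity route, the sketch below builds an activity and marks it eligible for prediction. The activity type string, the title, the `toppings` key, and the suggested phrase are placeholder values, not taken from our proof of concept.

```swift
import Foundation
import Intents   // adds suggestedInvocationPhrase to NSUserActivity on iOS 12+

// Hypothetical activity type; it would also need to be listed under
// NSUserActivityTypes in the app's Info.plist.
let orderPizzaActivityType = "com.example.pizza.order"

@available(iOS 12.0, *)
func makeOrderPizzaActivity(toppings: [String]) -> NSUserActivity {
    let activity = NSUserActivity(activityType: orderPizzaActivityType)
    activity.title = "Order a pizza"
    // Data the app needs to rebuild the order when the shortcut is used.
    activity.userInfo = ["toppings": toppings]
    activity.isEligibleForSearch = true
    // Lets Siri learn from this activity and suggest it later.
    activity.isEligibleForPrediction = true
    // Phrase proposed to the user when they record a voice shortcut.
    activity.suggestedInvocationPhrase = "Friday dinner"
    return activity
}
```

In a view controller, assigning the result to the inherited `userActivity` property, or calling `becomeCurrent()` on it, is one way to tell the system that the user is performing this activity.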

But we wanted to go a little further with Intents. Intents are also shortcuts but they are defined in a different way. The first thing you will need to do is add an Intents Extension to your app:

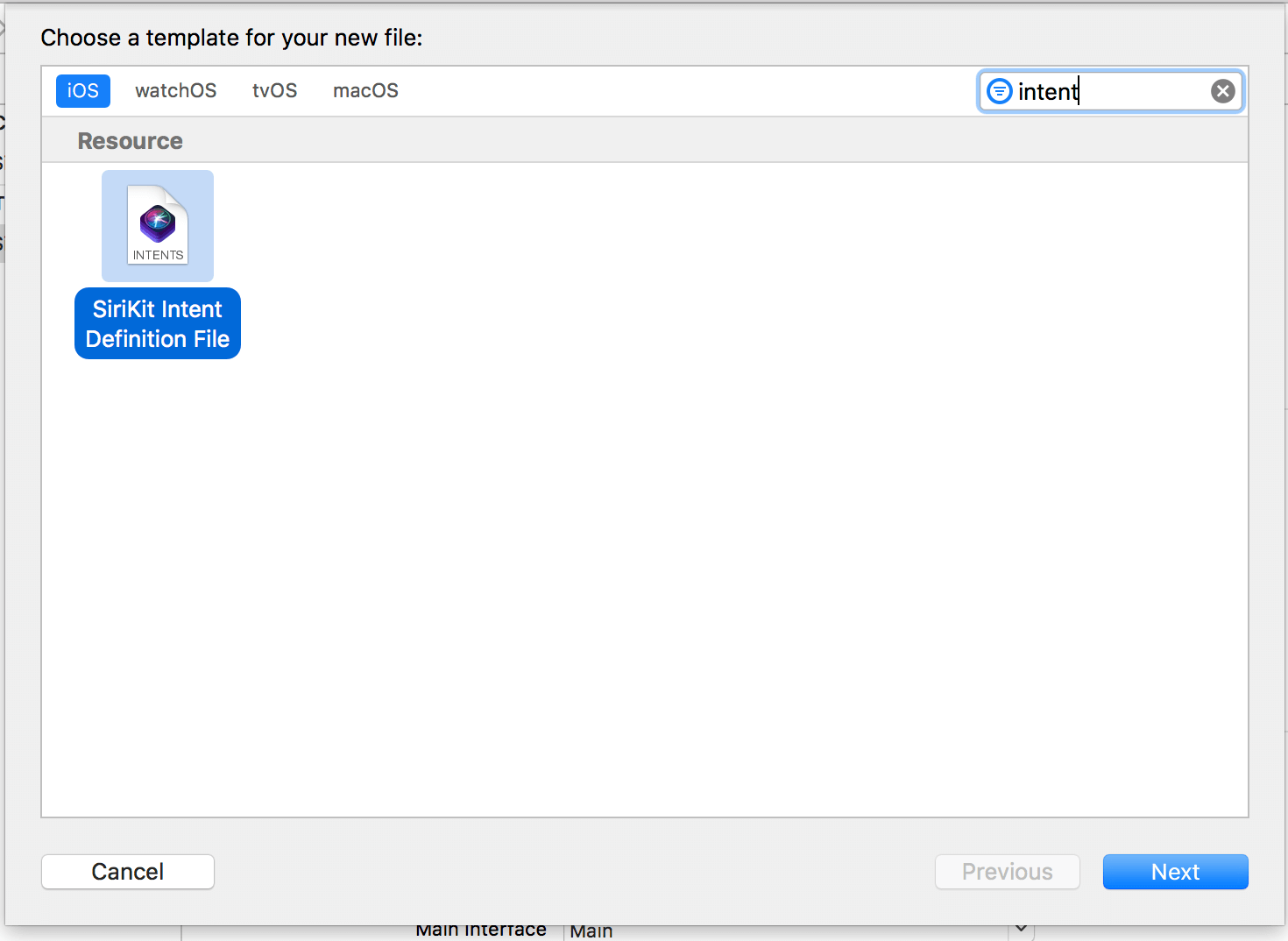

After that, we’ll need to create a New File of type SiriKit Intent Definition File, like this:

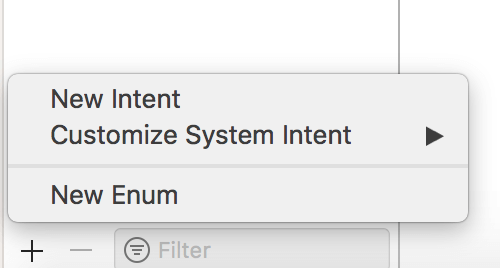

After creating a definition file, you can start creating your Intents by clicking the “+” sign and choosing New Intent.

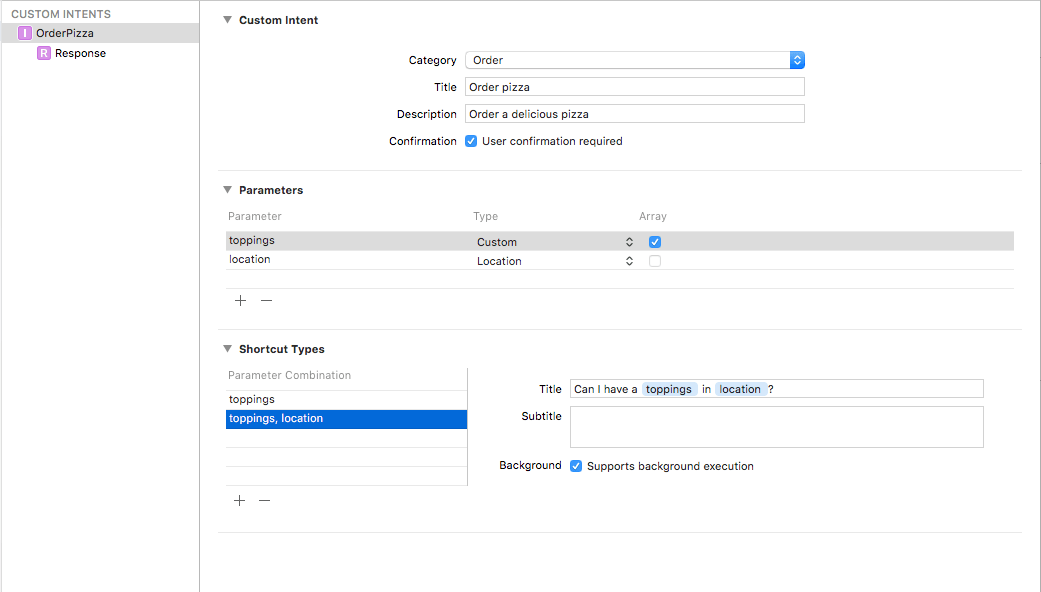

After that, we’ll be able to set the properties of that Intent, including:

- Category

- Title

- Description

- The parameters and their types

- And the parameter combinations

Parameter combinations are really important because Siri will search on these combinations to trigger the actions in your app.

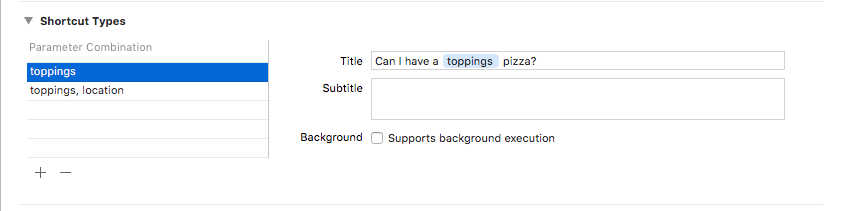

In this image, we can see two possible shortcut types: one with both the toppings and location parameters, which can be executed in the background since we have all the information we need to order a pizza, and one with only the toppings.

In the case when we only know toppings, the order cannot be executed in the background because we still have information we need from the user. Other variables you may want to account for would be:

- Sometimes users want pepperoni pizza to be delivered to a home

- Sometimes users want to pick up the pizza

In both cases, Siri can start the transaction by summoning the shortcut, but the user can complete the purchase in the app.

In this example, the combination Siri is most likely to suggest is the second one, where only the toppings are defined, since the toppings tend to stay the same while the delivery or pickup choice varies.

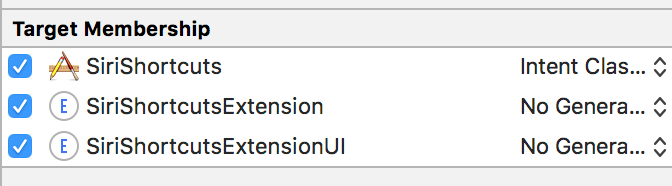
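The difference between the two combinations can be modeled in a few lines of plain Swift. This is only an illustrative sketch: the `PizzaOrderRequest` and `ShortcutExecution` types are hypothetical and are not the classes Xcode generates from the definition file.

```swift
// Plain-Swift model of the two parameter combinations.
struct PizzaOrderRequest {
    var toppings: [String]
    var deliveryAddress: String?   // nil when the location is not known yet
}

enum ShortcutExecution: Equatable {
    case inBackground    // toppings + location: the order can be placed directly
    case continueInApp   // toppings only: the app must collect the rest
    case notPossible     // nothing to order
}

func execution(for request: PizzaOrderRequest) -> ShortcutExecution {
    guard !request.toppings.isEmpty else { return .notPossible }
    return request.deliveryAddress == nil ? .continueInApp : .inBackground
}
```

A request with toppings and an address maps to `.inBackground`, while the same toppings without an address map to `.continueInApp`.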

After configuring your Intent, make sure that you are only generating the Intent classes for the targets in which you will use them. This is important because if you generate the classes for multiple targets, you’ll probably end up with class name conflicts and duplicated code.

Now we need to write the code to call Siri APIs to “donate” this Intent — so that Siri can suggest the Intent to our users. This is how we donate an Intent:

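    import Intents
    import CoreLocation

    // Donate the order to Siri once the user has chosen a pizza and a location.
    func order(pizza: Pizza, to location: CLPlacemark) {
        // OrderPizzaIntent is generated by Xcode from the intent definition file.
        let intent = OrderPizzaIntent()
        intent.toppings = pizza.toppings
        intent.location = location

        let interaction = INInteraction(intent: intent, response: nil)
        interaction.donate { error in
            if let error = error {
                print("Problems donating your Intent: \(error)")
                return
            }
            print("Intent donated")
        }
    }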

The OrderPizzaIntent class is auto-generated by Xcode. We usually donate at the end of a flow; in this case, when a user is about to finish their order. But how do we handle the Intents that Siri suggests?

Along with the Intent classes, Xcode auto-generates the OrderPizzaIntentHandling protocol.

You can implement that protocol in the IntentHandler class (IntentHandler.swift) of your Intents Extension:

    /// OrderPizzaIntentHandling
    extension IntentHandler: OrderPizzaIntentHandling {
        func handle(intent: OrderPizzaIntent,
                    completion: @escaping (OrderPizzaIntentResponse) -> Void) {
            let response = OrderPizzaIntentResponse(code: .success, userActivity: nil)
            // Call your API here
            completion(response)
        }
    }
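For context, the IntentHandler class extended above is the entry point of the Intents Extension. A minimal version, along the lines of what Xcode's extension template creates (the exact template may differ between Xcode versions), could look like this:

```swift
import Intents

// Entry point of the Intents Extension. The system asks it for an object
// that can handle each incoming intent.
class IntentHandler: INExtension {
    override func handler(for intent: INIntent) -> Any {
        // Returning self works when this class (through its extensions)
        // implements the handling protocol for every supported intent.
        return self
    }
}
```

If the extension grows to support several intents, this method is also a natural place to return a dedicated handler object per intent type.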

You can define more responses for your intents in the .intentdefinition file, and the code for them will be generated automatically.

So now you’ve defined your background intents — but what about the other intents that will lead your users to the location screen?

You can add a method to your AppDelegate to handle this:

    func application(_ application: UIApplication,
                     continue userActivity: NSUserActivity,
                     restorationHandler: @escaping ([UIUserActivityRestoring]?) -> Void) -> Bool {
        userActivity.isEligibleForPrediction = true
        if let intent = userActivity.interaction?.intent as? OrderPizzaIntent {
            router.goToChooseYourLocationScreen(intent)
            return true
        }
        return false
    }
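If the app went the NSUserActivity route instead of defining an Intent, the same AppDelegate method receives the activity itself, and the app can inspect its type. The helper below is a sketch under that assumption; the `ShortcutRouter` and `ShortcutRoute` names, the activity type string, and the `toppings` key are all hypothetical.

```swift
import Foundation

// Hypothetical destinations in the app; names are for illustration only.
enum ShortcutRoute: Equatable {
    case reorder(toppings: [String])
    case unhandled
}

enum ShortcutRouter {
    // Hypothetical activity type, matching whatever the app made eligible earlier.
    static let activityType = "com.example.pizza.order"

    // Maps an incoming activity to a destination without touching any UI.
    static func route(for userActivity: NSUserActivity) -> ShortcutRoute {
        guard userActivity.activityType == activityType else { return .unhandled }
        let toppings = userActivity.userInfo?["toppings"] as? [String] ?? []
        return .reorder(toppings: toppings)
    }
}
```

Inside `application(_:continue:restorationHandler:)`, the returned route could decide which screen the app opens, and whether the method returns true or false.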

That’s it. In just 20 minutes, you can start to integrate Siri Shortcuts in your app. Now that’s faster than a pizza delivery!

What can we expect in the future for Siri Shortcuts?

The complexity of the voice interaction with Siri Shortcuts is still somewhat limited, as you might expect from this first version. For example, with our pizza app, users can’t say a longer phrase to make a more specific order. If you say, “Order a pizza with coke” or “Order a pizza with orange juice,” Siri will display the expected shortcut for the phrase “order a pizza” only.

So, from an app developer point of view, it’s important to think in terms of very simple interactions, primarily verb/noun combinations. Using Siri Shortcuts to start a transaction is definitely a win — it still saves the user a lot of headache in finding and launching the application on their phone. And it can be a quick entry point to your app — where the user can then complete more complex transactions.

At a time when we, as consumers, are expecting to do more with our voice-powered digital assistants, it makes sense for the vast majority of apps to add support for Siri Shortcuts.
