Decoding SiriKit: What You Can and Can’t Do with Siri in Your App

This year at WWDC, Craig Federighi, Apple’s senior vice president of Software Engineering, introduced SiriKit, the Siri SDK that lets developers build Siri support into their iOS apps. It had been expected for a while, and we couldn’t be more excited about it.

Of course, given the implications of tying our clients’ apps into Siri, we were eager to understand exactly what we could do with the first generation of SiriKit, which is now available as a developer preview in the Xcode 8 beta.

The first thing I learned is that Siri still isn’t quite as open as we’d hoped. SiriKit supports only seven kinds of app, which Apple calls domains:

- Audio and video calling

- Messaging

- Payments

- Searching photos

- Workouts

- Ride booking (e.g. Uber)

- Climate and radio control for CarPlay

What you can and can’t do with SiriKit

Payments are an obvious fit for SiriKit and could work with almost any app that includes an ecommerce component. Imagine you are with a group of friends, chatting about how much fun you had at a party last night. In the middle of the conversation, one friend reminds you that you owe him $10 for the late-night burrito. So you grab your phone and say, “Hey Siri, send John $10 for last night’s burrito using MyAwesomePaymentApp.” Siri does its thing, the transaction is completed, and your phone goes back in your pocket as you pick the conversation back up. There’s a lot of potential for ecommerce applications, and certainly some interesting use cases in the other six areas covered by this first-generation SiriKit.

It’s also important for businesses and developers to understand the current limitations. One use case the current SiriKit doesn’t support is advanced content search. We’ve done a lot of work with media companies like NBC, CBS and ABC, and it would be great to use SiriKit to search the text of stories and return results inside the Siri interface. For now, though, users still have to open the app and search the content there.

Building a proof of concept SiriKit app

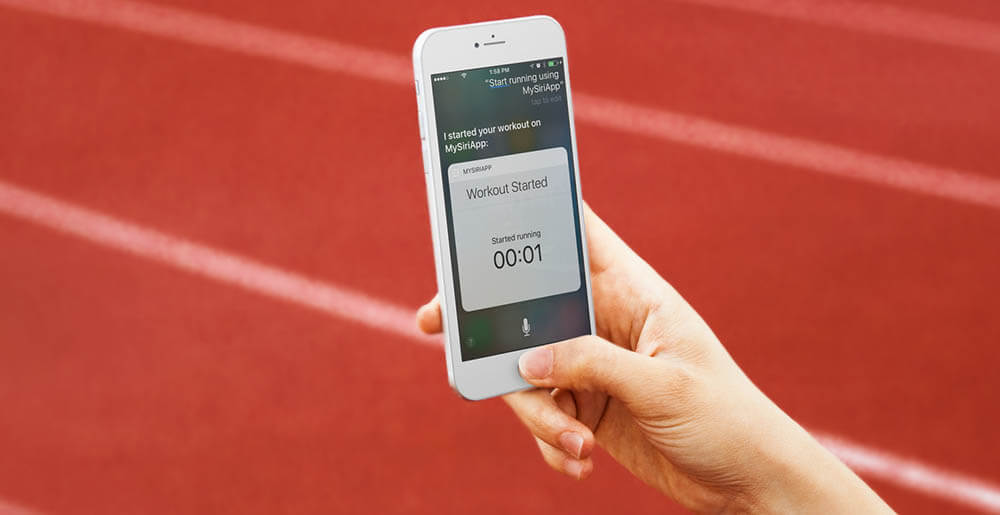

OK, enough about use cases. I couldn’t wait to dive into SiriKit and find out how hard it would be to integrate into an app, so I built a basic exercise app that starts and ends workouts in response to voice commands from Siri.

SiriKit works through what Apple calls intents. An intent is an object that represents the user’s intention and carries the information the user specified, such as the type of workout. Whether the user is starting or ending a workout is expressed by which intent arrives: SiriKit defines separate classes such as INStartWorkoutIntent and INEndWorkoutIntent. The lifecycle of an intent has three steps: Resolve, Confirm and Handle.

Resolve is where your app helps Siri understand each value the user provided. For every parameter, you tell Siri whether the value can be used as is or whether Siri needs more from the user, such as a missing value, a confirmation or a choice between several matches (disambiguation).

Confirm is where you tell Siri whether your app has everything it needs to complete the intent. In this step you also describe the expected result, which Siri can present to the user for confirmation when needed.

Handle is the final and most important step. This is where your app actually carries out the intent and reports back to Siri whether the task succeeded or, in some cases, failed. If your app needs more time to finish a request, for example because of a slow network connection, you can tell Siri that the task will keep running after the user leaves the Siri interaction.
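To make the three steps concrete, here is a minimal sketch of a handler for the workout app’s “start workout” request, built on the Intents framework’s INStartWorkoutIntentHandling protocol. The method names follow the current SDK (earlier SDKs used different argument labels), and the class name and activity type string are hypothetical. Handle replies with .continueInApp, which asks iOS to open the app so it can start the workout itself.

```swift
import Foundation
import Intents

// Hypothetical handler for "Start my run using MyWorkoutApp".
final class StartWorkoutIntentHandler: NSObject, INStartWorkoutIntentHandling {

    // Hypothetical activity type the main app would recognize.
    static let activityType = "com.example.MyWorkoutApp.startWorkout"

    // Step 1: Resolve. Accept the spoken workout name, or ask Siri
    // to prompt the user if none was given.
    func resolveWorkoutName(for intent: INStartWorkoutIntent,
                            with completion: @escaping (INSpeakableStringResolutionResult) -> Void) {
        if let name = intent.workoutName {
            completion(INSpeakableStringResolutionResult.success(with: name))
        } else {
            completion(INSpeakableStringResolutionResult.needsValue())
        }
    }

    // Step 2: Confirm. Report that the app is ready to start the workout.
    func confirm(intent: INStartWorkoutIntent,
                 completion: @escaping (INStartWorkoutIntentResponse) -> Void) {
        completion(INStartWorkoutIntentResponse(code: .ready, userActivity: nil))
    }

    // Step 3: Handle. Hand off to the app with an NSUserActivity
    // describing the request.
    func handle(intent: INStartWorkoutIntent,
                completion: @escaping (INStartWorkoutIntentResponse) -> Void) {
        let activity = NSUserActivity(activityType: StartWorkoutIntentHandler.activityType)
        completion(INStartWorkoutIntentResponse(code: .continueInApp, userActivity: activity))
    }
}
```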

The first step in handling intents is to add an Intents extension to your app. The extension receives every intent Siri sends to your app and processes what the intent contains. The extension’s Info.plist declares which intents it supports and which of those are restricted while the device is locked. The restricted list is a subset of the supported intents that should only be handled once the device is unlocked. This matters for privacy, and it keeps other people from using your private apps through Siri on a locked phone.
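Here is a sketch of the extension’s principal class. Siri calls handler(for:) with each incoming intent, and the object you return must conform to that intent’s handling protocol. The intents the extension accepts are listed in its Info.plist under NSExtension › NSExtensionAttributes › IntentsSupported (for this app, INStartWorkoutIntent and INEndWorkoutIntent), and the lock-screen subset goes under IntentsRestrictedWhileLocked. To keep the block self-contained, this class handles only INEndWorkoutIntent itself; IntentHandler is the name Xcode’s template uses, and the rest is illustrative.

```swift
import Foundation
import Intents

// Principal class of the Intents extension (named by NSExtensionPrincipalClass
// in the extension's Info.plist). This sketch handles "end workout" itself.
class IntentHandler: INExtension, INEndWorkoutIntentHandling {

    // Siri calls this for every intent; return an object that conforms to the
    // matching handling protocol, or nil for intents this sketch doesn't handle.
    override func handler(for intent: INIntent) -> Any? {
        if intent is INEndWorkoutIntent {
            return self
        }
        return nil
    }

    // Ending a workout is handed back to the app so it can stop its timer.
    func handle(intent: INEndWorkoutIntent,
                completion: @escaping (INEndWorkoutIntentResponse) -> Void) {
        completion(INEndWorkoutIntentResponse(code: .continueInApp, userActivity: nil))
    }
}
```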

The code itself is straightforward: each intent has a handling protocol (for example, INStartWorkoutIntentHandling) whose methods you implement in your extension to cover every possible scenario. Your extension also decides whether an intent succeeds or fails. For instance, in a running app you might reject “Start my walk using MyWorkoutApp” but accept “Start my run using MyWorkoutApp.”
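The run-versus-walk rule is easiest to test if the decision lives in plain Swift, outside the SiriKit callbacks. The sketch below is one hypothetical way to do that; the enum, the function and the list of accepted names are illustrative and not part of SiriKit. Inside resolveWorkoutName(for:with:) you would map .accept to INSpeakableStringResolutionResult.success(with:), .reject to .unsupported() and .askUser to .needsValue().

```swift
import Foundation

// Hypothetical outcome of checking a spoken workout name.
enum WorkoutNameDecision: Equatable {
    case accept(String)  // normalized name the app will start
    case reject          // the app doesn't offer this kind of workout
    case askUser         // nothing usable was said; Siri should ask
}

// Hypothetical policy for a running-only app: accept run-like names, reject the rest.
func decideWorkoutName(_ spoken: String?,
                       supported: Set<String> = ["run", "running", "jog"]) -> WorkoutNameDecision {
    guard let spoken = spoken else { return .askUser }
    let normalized = spoken.trimmingCharacters(in: .whitespacesAndNewlines).lowercased()
    if normalized.isEmpty { return .askUser }
    return supported.contains(normalized) ? .accept(normalized) : .reject
}
```

Keeping the policy free of Intents types means it can be unit tested without Siri, while the handler stays a thin translation layer.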

One important class that works behind the scenes is NSUserActivity, which helps your app understand the user’s context. Apple originally introduced it in iOS 8 and OS X Yosemite to support Handoff, which lets users start an activity on one device and seamlessly resume it on another, and it is now part of SiriKit as well. NSUserActivity tracks the current user state and, with Siri, lets your app know what the user did while engaging with Siri. Note that NSUserActivity passes data between your app and the system in both directions: it also tells iOS which actions your app can perform, which opens the door to new integrations with other apps.

For example, if you have a VoIP app, NSUserActivity lets iOS know that your app can make calls, so the system may offer it as a calling option on a contact’s card in the Contacts app.
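On the app side, NSUserActivity is how the details of a Siri request reach your app when the extension replies with .continueInApp. The Intents framework adds an interaction property to NSUserActivity that carries the original intent. Here is a minimal sketch with a hypothetical helper name, which you could call from your app delegate’s application(_:continue:restorationHandler:):

```swift
import Foundation
import Intents

// Returns the workout name from a Siri "start workout" hand-off, or nil if
// this activity didn't come from that intent. `interaction` is the property
// the Intents framework adds to NSUserActivity.
func requestedWorkoutName(from activity: NSUserActivity) -> String? {
    guard let intent = activity.interaction?.intent as? INStartWorkoutIntent else {
        return nil
    }
    return intent.workoutName?.spokenPhrase
}
```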

SiriKit’s IntentsUI and INVocabulary

Hopefully, you now have a basic understanding of how intents work and how to handle them in your extension. There are two other important pieces to understand before you get started: IntentsUI and INVocabulary.

First, IntentsUI lets you show custom content inside the Siri interface for the intents your app handles. For example, you might want to show a timer for the user’s current workout. Siri can’t display your custom content on its own, so you provide it through IntentsUI.
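A minimal sketch of an Intents UI extension’s view controller that shows a simple workout timer inside Siri. It implements the INUIHostedViewControlling method configureView(for:of:interactiveBehavior:context:completion:), available since iOS 11 (iOS 10 used configure(with:context:completion:)). The class name, layout and size are hypothetical, and the timer simply counts up from zero; a real app would read the workout’s actual start time, for example from storage shared with the main app.

```swift
import UIKit
import Intents
import IntentsUI

// Formats elapsed seconds as MM:SS.
func formatElapsed(_ totalSeconds: Int) -> String {
    let minutes = totalSeconds / 60
    let seconds = totalSeconds % 60
    func pad(_ n: Int) -> String { return n < 10 ? "0\(n)" : "\(n)" }
    return "\(pad(minutes)):\(pad(seconds))"
}

// Hypothetical principal view controller of the Intents UI extension.
final class WorkoutSnippetViewController: UIViewController, INUIHostedViewControlling {
    private let timeLabel = UILabel()
    private var timer: Timer?
    private var elapsed = 0

    override func viewDidLoad() {
        super.viewDidLoad()
        timeLabel.font = .monospacedDigitSystemFont(ofSize: 32, weight: .semibold)
        timeLabel.textAlignment = .center
        timeLabel.frame = view.bounds
        timeLabel.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        view.addSubview(timeLabel)
    }

    // Siri passes the interaction to display; report success and a desired size.
    func configureView(for parameters: Set<INParameter>,
                       of interaction: INInteraction,
                       interactiveBehavior: INUIInteractiveBehavior,
                       context: INUIHostedViewContext,
                       completion: @escaping (Bool, Set<INParameter>, CGSize) -> Void) {
        guard interaction.intent is INStartWorkoutIntent else {
            completion(false, [], .zero)  // let Siri fall back to its default UI
            return
        }
        timeLabel.text = formatElapsed(0)
        // Count up once per second while the snippet is on screen.
        timer = Timer.scheduledTimer(withTimeInterval: 1, repeats: true) { [weak self] _ in
            guard let self = self else { return }
            self.elapsed += 1
            self.timeLabel.text = formatElapsed(self.elapsed)
        }
        completion(true, parameters, CGSize(width: 320, height: 80))
    }

    deinit { timer?.invalidate() }
}
```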

Second, there is INVocabulary, which lets you define specific terms that Siri might not otherwise understand. For example, if one of your users considers weightlifting a workout, Siri won’t understand the term and won’t trigger your app when it hears it. Once you teach Siri that the term is valid, it will respond appropriately when it hears it. But use INVocabulary with care: if you teach it common words that mean one thing in your app and something else in other apps, you may end up confusing Siri and creating a bad experience for your users.
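Registering a user-specific term such as “weightlifting” might look like the sketch below. INVocabulary.shared().setVocabularyStrings(_:of:) with the .workoutActivityName type is the Intents API for this; the two helper names are hypothetical. As described in Apple’s documentation, the strings should be ordered with the most important first, and registration happens in the main app rather than in the Intents extension.

```swift
import Foundation
import Intents

// Trims whitespace and drops empty entries while keeping the caller's order,
// since earlier strings are treated as higher priority.
func cleanedTerms(_ terms: [String]) -> [String] {
    return terms
        .map { $0.trimmingCharacters(in: .whitespacesAndNewlines) }
        .filter { !$0.isEmpty }
}

// Hypothetical helper: registers the user's custom workout names with Siri.
// Call it from the main app whenever those names change; each call replaces
// the previously registered strings of this type.
func registerCustomWorkoutNames(_ names: [String]) {
    let terms = NSOrderedSet(array: cleanedTerms(names))
    INVocabulary.shared().setVocabularyStrings(terms, of: .workoutActivityName)
}
```

Registering only the user’s own custom workout names, rather than common words, is one way to follow the caution above about confusing Siri.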

In my tests, keywords played a big role in whether Siri triggered the app. For example, you can’t say “Run on MyWorkoutApp” and expect MyWorkoutApp to launch; keywords like Start, Stop and Pause are crucial for engaging with your app through Siri. Users must also mention the app name somewhere in the phrase, or else Siri falls back to the built-in iOS apps and the web when it searches.

SiriKit: Breaking down app silos

SiriKit adds a whole new dimension to iOS development: by bringing Siri’s magic into their own apps, developers can take another important step toward a better user experience.

For years, apps have lived in silos, launched only by tapping icons on the phone’s screen. Recent changes to iOS and Android have improved how apps coexist with the web and with system-level apps such as email, calendar and messaging. And now that Siri is open to developers, summoning your app by voice is another step toward breaking down the walls between apps and the rest of the mobile experience.

The first generation of SiriKit, which will officially launch in the fall, is pretty limited. But over time, it will be a big opportunity for developers and the businesses they represent.