Apps Will Determine Who Wins the Home Assistant Market

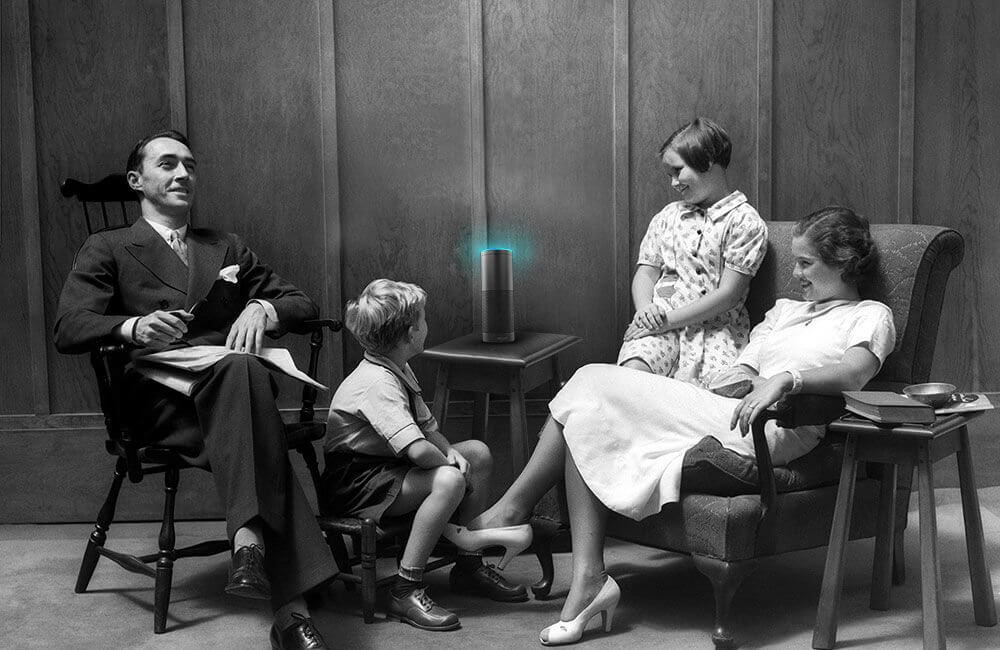

While the tech industry has been abuzz with talk about bots that live on messaging platforms, there’s a parallel evolution happening with a different kind of conversational app: voice assistants. It’s particularly interesting when it comes to the emergence of the home assistant hardware category.

As the tech heavyweights duke it out to have voice assistants at the center of the smart home, Amazon has taken a cue from past digital devices by making its voice assistant Alexa extremely developer friendly to encourage custom apps to be built for its platform. That fact alone might just help its first-to-market Echo device maintain a stronghold on the home assistant category.

Amazon launched the Echo home assistant, with Alexa on board, in June 2015 to a lot of raised eyebrows from consumers who simply didn’t get it. But the platform has slowly taken hold with early adopters who swear by Alexa’s ability to understand language, and with developers who have now created more than 1,000 apps (or as Amazon calls them, “skills”). Amazon has since upped its bet on the home assistant market by reportedly increasing its Echo/Alexa team to more than 1,000 dedicated workers.

Meanwhile, Google is about to launch its Google Home with its built-in voice assistant, and Apple’s Siri continues to mature on iPhone, iPad and the new Apple TV. There’s also Microsoft’s Cortana, which is mostly used on Windows phones but is also available on iOS and Android as a standalone app.

So, where is the market heading for these voice assistants, and who will own the home? Let me start by taking what seems like an enormous step back in time: six years.

The evolution of the Siri voice assistant

The category of voice assistants became relevant with the introduction of Siri in 2010. And no, I’m not talking about Apple’s Siri — I mean Siri’s Siri.

Long before it had a dedicated home in iOS, Siri started as a standalone company that created first-of-its-kind voice assistant software. It was, at the time, an open platform that allowed developers to build apps and leverage Siri through third-party APIs. And for its time, Siri interpreted human language fairly accurately, helped its master complete tasks efficiently without much of a learning curve, and had a unique personality. Siri represented a major breakthrough in the category; no other product came close.

When Apple acquired Siri, some in the industry considered it a setback. Yes, Siri usage exploded as millions of iPhone and iPad users had default access to Siri when iOS 5 was introduced in 2011. But Apple effectively closed the doors on integrations, stifling the potential for third-party applications that could have ultimately made Siri more valuable for users. Over the past few years, Siri has grown incrementally more capable as Apple added new languages (Siri is now “localized” in 34 languages) and extended how it could access data on a user’s phone. And now, Apple is slowly opening Siri up to external developers, including with the recent introduction of SiriKit, but there are still many limitations to Siri’s reach and intelligence.

Meanwhile, Google Now and Cortana followed Siri, starting as task-oriented “assistants” with custom interfaces. But to date, they’ve mostly acted as voice-triggered web search engines, limited in part by their captivity on phones.

Amazon Echo and Alexa opened the door for innovation

When Amazon Echo with built-in Alexa was introduced in 2015, many consumers didn’t immediately understand the benefit of a home assistant. But developers and technophiles did, and Alexa was built the way other Amazon services have been built (e.g. its Amazon Web Services cloud-based infrastructure): as an open platform. It was a new category of device that third parties could use to create their own applications. Alexa on its own is not very powerful: It can do basic search and stats (e.g. weather, sports), play some multimedia content, and, most importantly to Amazon, order things. But the potential consumer appeal of Alexa becomes orders of magnitude larger if more brands and businesses take advantage of its simplified SDK and create new capabilities.

During our annual hackathon in January, one of our teams built a proof-of-concept app that integrated our Pingboard-powered employee directory, some location-sensing beacons that could track employees based on the whereabouts of their mobile phones, and Alexa. The hack solution, developed in two days, would allow us to ask Alexa, “Where is Eric Shapiro?” If our CEO was in the building, Echo/Alexa would tell us exactly where.

We had fun speaking with Alexa, and learned something very important: Building a multifaceted app that integrates with multiple services and Alexa was actually pretty simple.
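To give a feel for how little glue such an app needs, here is a minimal sketch of a request handler for a “where is” skill. It is not our hackathon code: the intent name `WhereIsIntent`, the slot name `Person`, and the two in-memory dictionaries standing in for the employee directory and the beacon data are all hypothetical. The request and response shapes follow the JSON format that custom Alexa skills exchange with the service.

```python
# Hypothetical stand-ins for the two data sources; a real app would
# query the employee directory and the beacon service instead.
DIRECTORY = {"eric shapiro": "emp-001"}                     # name -> employee id
BEACON_SIGHTINGS = {"emp-001": "the third-floor kitchen"}   # id -> last known spot


def speech(text):
    """Wrap plain text in the JSON envelope a custom skill returns."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": True,
        },
    }


def handle_request(event):
    """Answer a 'where is <person>' intent request."""
    request = event.get("request", {})
    if request.get("type") != "IntentRequest":
        return speech("Ask me where someone is.")

    intent = request.get("intent", {})
    if intent.get("name") != "WhereIsIntent":  # hypothetical intent name
        return speech("Sorry, I can't help with that.")

    # "Person" is a hypothetical slot name defined by the skill author.
    name = intent.get("slots", {}).get("Person", {}).get("value") or ""
    employee_id = DIRECTORY.get(name.lower())
    if employee_id is None:
        return speech(f"I couldn't find {name} in the directory.")

    location = BEACON_SIGHTINGS.get(employee_id)
    if location is None:
        return speech(f"{name} doesn't appear to be in the building.")
    return speech(f"{name} is in {location}.")
```

Everything specific to the skill fits in one function: read the slot, look up two services, and return a sentence for Alexa to speak.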

Placing boundaries on natural language processing

Being open to third parties is crucial for building momentum with other businesses and services. But Alexa also excels at understanding what users ask of it. At the core of its natural language processing capabilities is what the company calls Alexa Voice Service.

It’s difficult to quantify accuracy other than from personal experiences, but many in the industry (myself included) feel Alexa is a cut above the other assistants in understanding what users are asking for. One reason, as others have noted, is that Alexa operates like a programming command line. You provide a command to Alexa with specific parameters and variables, and it executes on it. If you deviate from that command line structure, it will simply not work. This puts some of the responsibility on the user to know how to ask a question in a format Alexa understands. But from my experience, once a user understands this back and forth, the efficiency at completing the requested task is outstanding.
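The command-line analogy can be made concrete with a toy matcher. This is a simplified illustration, not how Alexa is implemented: the intent names and sample phrasings are hypothetical, and real speech understanding is far more forgiving than an exact pattern match. The point is only that a fixed phrase with named variables either matches and yields its parameters, or does not match at all.

```python
import re

# Hypothetical sample phrasings; {name} marks a variable the user fills in.
TEMPLATES = {
    "WhereIsIntent": "where is {person}",
    "WeatherIntent": "what is the weather in {city}",
}


def compile_template(template):
    """Turn 'where is {person}' into a regex with one named group per variable."""
    # Splitting on a capturing group alternates literal text and variable names.
    parts = re.split(r"\{(\w+)\}", template)
    pattern = ""
    for index, part in enumerate(parts):
        if index % 2 == 0:
            pattern += re.escape(part)      # literal words must match exactly
        else:
            pattern += f"(?P<{part}>.+)"    # variable captures the spoken value
    return re.compile(f"^{pattern}$", re.IGNORECASE)


COMPILED = {intent: compile_template(t) for intent, t in TEMPLATES.items()}


def parse(utterance):
    """Return (intent, variables) if the utterance fits a template, else None."""
    text = utterance.strip().rstrip("?.!")
    for intent, regex in COMPILED.items():
        match = regex.match(text)
        if match:
            return intent, match.groupdict()
    return None


print(parse("Where is Eric Shapiro?"))
print(parse("Hey, any idea where Eric went?"))
```

The first call fits the “where is” template and returns the intent together with the captured name; the second deviates from the expected structure and returns `None`, which is the “it will simply not work” case.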

There is a tradeoff here. Google’s assistant and Apple’s Siri don’t have any such boundaries, as they attempt to listen and respond to language in a much more natural way. They have more potential than the current Alexa to eventually feel like a human interaction. Amazon may be stifling the future of Alexa by putting in these boundaries. But for user experience right now, the command-line approach works much better.

Accurate home assistant + open platform = developer friendly

So, Alexa is open for developers to easily create apps. And Amazon has set boundaries on how its language processing works that make it a better user experience today than other home assistants. Together, those two factors make Alexa a very friendly developer platform. Open, defined and doable.

Apple’s Siri, by comparison, is fairly unfriendly to developers. Even the new SiriKit allows for only seven different types of applications on the iPhone and iPad. And on Apple TV, Siri isn’t open at all, at least not yet. Natural language failure is another limiting factor: given how often Siri fails to understand user requests, developers will continue to be less inclined to build products for it.

Google could still own the home assistant market

Google is approaching voice assistants from multiple angles and probably has the best chance to challenge Alexa in terms of wide developer support. Announced at the recent Google I/O developer conference, the Google Home assistant is the company’s answer to Amazon Echo. Google hasn’t yet announced whether, or to what extent, the platform will be open to developers for companion apps, but if its Android ecosystem is any indication, you can bet the company will encourage innovation by third parties.

From an intelligence point of view, Google has unparalleled capability with its experience in machine learning and access to data. Google also has arguably the strongest foundation to understand user intent with its Knowledge Graph. Google’s voice assistant capabilities aren’t as bounded as Amazon’s, which means there’s more chance for failure now but more potential later as its AI and natural language processing improve.

With all this said, Google is the only one of the three without a public API available for review, so how developer friendly the Google Home assistant will be is still very much to be determined.

‘It’s about the software, stupid’

To summarize, Amazon’s Alexa/Echo is open to developers and performs the best in terms of how it understands the user. Google has probably the best data and intelligence in the tech industry and is coming out with the new Google Home, but at this point its home platform is very undefined. Siri on iOS is starting to open up, but it’s pretty limited in what you can do with third-party integrations, and Apple has been very unclear about what role Siri will have in the home.

All of these subplots are changing daily. But at this point, Amazon is my clear favorite to win for developers looking to create custom home assistant apps. I’m not alone. Tech publisher Tim O’Reilly wrote last week, “Amazon is a platform thinker, and so they’ve provided a neat set of affordances not just for Alexa users but for Alexa developers.” If Alexa’s a favorite of developers, then the software will follow. And if there is more software — whether we call them “apps” or “skills” — it will appeal to more consumers.

Just as the iTunes store with digital music files drove the iPod’s success, just as apps have made mobile phones indispensable and ubiquitous, and just as apps are expected to drive the growth of connected TVs, we believe software will drive the success of home assistants.

As tech pundit Walt Mossberg famously wrote in a review of the very first iPad, “It’s about the software, stupid.” Put another way, developers and the apps they build may determine who wins the home assistant market.